Gemma 4 Wiki

Track Gemma 4 model sizes, benchmarks, prompting, function calling, multimodal input, local deployment, and fine-tuning across the official Google ecosystem.

Latest Updates

Discover the newest guides, tips, and content

gemma 4 cloud: Local-First Setup and Gaming Workflow Guide 2026

Learn how to use gemma 4 cloud workflows for gaming tasks, modding help, and offline AI coding with practical setup steps and trade-off analysis.

Gemma 4 on Mac: Complete Local Setup, Tuning, and Use Guide 2026

Learn how to install, run, and optimize Gemma 4 on Mac in 2026 with practical model picks, performance tips, and troubleshooting steps.

gemma 4 license: Creator, Modding, and Commercial Use Guide 2026

Learn how the gemma 4 license affects game studios, modders, and content creators in 2026, with practical compliance checklists and deployment tips.

gemma 4 api: Complete Setup and Optimization Guide for Creators 2026

Learn how to set up, test, and optimize gemma 4 api for game workflows, AI NPCs, mod tools, and multimodal pipelines in 2026.

gemma 4 cli: Local AI Setup and Game Dev Workflow Guide 2026

Learn how to install, configure, and optimize gemma 4 cli for game writing, coding, and live design workflows in 2026.

Gemma 4 Coding: Complete Local VS Code Setup and Workflow Guide 2026

Learn how to run Gemma 4 locally for coding inside VS Code with Ollama and Continue. Includes setup steps, permission tuning, performance expectations, and troubleshooting for 2026.

gemma 4 fine tune: No-Code Unsloth Studio Workflow Tutorial 2026

Learn a practical gemma 4 fine tune workflow with Unsloth Studio, from GPU setup and dataset mapping to export and evaluation in 2026.

gemma 4 local: Offline AI Setup and Gaming Workflow Guide 2026

Learn how to run Gemma 4 on your own PC for private, offline gaming tasks like mod planning, walkthrough drafting, and coding help in 2026.

gemma 4 function calling: Mobile Game Command Systems Guide 2026

Build fast on-device game actions with gemma 4 function calling patterns, tool schemas, tuning workflows, and QA steps for production in 2026.

Gemma 4 Agent: Offline AI Setup and Gamer Workflow Guide 2026

Learn how to set up a Gemma 4 agent locally for gaming workflows, modding support, log analysis, and offline AI assistance in 2026.

Gemma 4 API Pricing: Cost Breakdown for Game Dev Teams in 2026

A practical 2026 guide to Gemma 4 API pricing, including local vs hosted costs, budgeting formulas, and deployment choices for gaming studios.

Gemma 4 SWE benchmark: Model Picks, Performance, and Setup Guide 2026

A practical 2026 guide to the Gemma 4 SWE benchmark, including model tiers, hardware targets, coding performance, and local setup tips.

Ollama MLX Gemma4: Complete Local AI Setup and Tuning Guide 2026

Learn how to run Ollama MLX Gemma4 locally for gaming workflows, modding support, image analysis, and fast multimodal prompts in 2026.

gemma 4 swe bench pro: Practical Performance Guide for Dev Teams 2026

A hands-on 2026 guide to evaluating Gemma 4 for SWE-bench Pro style workflows, local coding agents, and gaming studio development pipelines.

gemma 4 31b required vram: Practical GPU Memory Guide 2026

Find out how much VRAM Gemma 4 31B really needs across 4-bit, 6-bit, and 8-bit setups, plus context, speed, and offload tips for local use in 2026.

gemma 4 31b benchmark coding: Performance Guide for Game Dev Teams 2026

A practical 2026 guide to gemma 4 31b benchmark coding for game studios, with benchmark context, hardware planning, workflow setup, and coding task strategies.

gemma 4 benchmark scores: Full Model Comparison and Hardware Guide 2026

A practical breakdown of gemma 4 benchmark scores, model rankings, VRAM needs, and setup tips to choose the right Gemma 4 version in 2026.

Gemma4 Transformers: Local Setup, Tuning, and Workflow Guide 2026

Learn how to run Gemma4 Transformers locally for private, offline AI workflows. Includes setup steps, model sizing, tuning tips, and practical use cases for creators.

gemma 4 chat template: OpenCode Setup, Fixes, and Workflow Guide 2026

Learn how to configure, debug, and optimize the gemma 4 chat template for tool-calling workflows in 2026, including OpenCode and Claude Code style harnesses.

gemma 4 26b mlx apple silicon: Setup, Benchmarks, and Mac Guide 2026

Learn how to run Gemma 4 26B with MLX on Apple Silicon Macs, including install steps, performance tuning, VRAM planning, and practical creator workflows in 2026.

Gemma4 tool calling Ollama: Practical Setup, Prompts, and Workflow Guide 2026

Learn how to implement Gemma4 tool calling Ollama workflows with model selection, function schemas, prompt patterns, debugging steps, and performance tuning for local AI apps.

gemma 4 awq: Local AI Setup and Gamer Workflow Guide 2026

Learn how to use gemma 4 awq for local, private, and offline gaming workflows on PC and phone, including hardware picks, settings, and practical optimization tips.

gemma 4 vllm support: Complete Setup, Benchmarks, and Fixes 2026

Learn how to enable gemma 4 vllm support for fast, scalable inference in gaming workflows, from local testing to production deployment.

gemma 4 docker: Complete Local Setup, Benchmarks, and Workflow Guide 2026

Learn how to run Gemma 4 in Docker for private, fast local AI workflows. Includes setup steps, performance tuning, troubleshooting, and practical game dev use cases.

Gemma4 31B requirements: Local Hardware and Setup Guide 2026

A practical breakdown of Gemma4 31B requirements, including VRAM, RAM, storage, context length, and a step-by-step local deployment checklist for 2026.

Gemma 4 INT4: Local AI Setup and Gaming Workflow Guide for Creators 2026

Learn how to run Gemma 4 INT4 locally for gaming workflows, from hardware planning and install steps to performance tuning and practical creator use cases in 2026.

Gemma4 Quantization: Best Performance and Quality Settings Guide 2026

Learn how to tune Gemma4 quantization for better FPS-friendly workflows, lower VRAM usage, and strong output quality on everyday gaming PCs in 2026.

gemma 4 26b gguf: Local Gaming Prototype Guide and Benchmarks 2026

Learn how to run Gemma 4 26B GGUF locally for game prototyping, compare quantizations, tune performance, and build better browser-based game demos in 2026.

Gemma 4 Bartowski: Best Local AI Setup for Gaming Workflows 2026

Learn how to use Gemma 4 Bartowski style local models for gaming tasks, from quest planning to translation, NPC dialogue prototyping, and performance tuning in 2026.

Gemma 4 Audio: Practical Setup, Limits, and Gaming Workflows 2026

Learn what Gemma 4 audio support includes, what it does not, and how to build a reliable voice workflow for game mods, NPC tools, and creator pipelines in 2026.

Gemma 4 Resources

Everything you need to get started with Gemma 4 — from local setup to API integration

Gemma 4 Tutorial

Gemma 4 launched on April 2, 2026 in four official sizes: E2B, E4B, 26B A4B, and 31B. The family is built for open-weight deployment under Apache 2.0, with smaller edge models aimed at mobile and laptop-class hardware and larger models aimed at desktops, workstations, and servers.

Understand the four official Gemma 4 sizes

Gemma 4 comes in E2B, E4B, 26B A4B, and 31B. E2B and E4B accept text, image, and audio input; 26B A4B and 31B accept text and image input and target larger local or server deployments.

Match the model to your hardware

Use E2B or E4B when you want mobile, edge, or laptop-friendly local inference. Use 26B A4B for a stronger general-purpose local model, and 31B when you want the largest official Gemma 4 checkpoint.

Choose a starting point

Gemma 4 26B A4B is a strong default when you want a capable first experience and your hardware can handle it. If you want the lightest starting point, begin with an instruction-tuned edge model and move up when your workload needs more capability.

Pick how you want to try it

Try hosted Gemma 4 through Google AI Studio and the Gemini API, or download open weights from Hugging Face or Kaggle for local use, tuning, and custom deployment.

Know what Gemma 4 is optimized for

The family is built for reasoning, coding, agentic workflows, and multimodal understanding. Edge models support 128K context, while 26B A4B and 31B support up to 256K context.

Quick Tips

- Instruction-tuned (-it) variants are best for chat and assistant use cases.

- E2B and E4B are the most hardware-accessible starting points for local experimentation.

- The 26B A4B is a Mixture-of-Experts model with faster effective inference than a dense model of similar total size.

- All Gemma 4 weights are released under the Apache 2.0 license.

Gemma 4 Ollama Setup

Ollama is one of the fastest ways to get Gemma 4 running on a laptop or workstation. The default Ollama flow is simple: install Ollama, pull Gemma 4, confirm the model list, choose the right tag for your hardware, and then run from the CLI or local API.

Install and verify Ollama

Download Ollama for Windows, macOS, or Linux, install it, and verify the setup with the command ollama --version.

Pull the default Gemma 4 variant

Use ollama pull gemma4 to download the default Gemma 4 package, then run ollama list to confirm it is available locally.

Choose the right model tag

Use gemma4:e2b for the lightest edge option, gemma4:e4b for a stronger edge default, gemma4:26b for the 26B A4B MoE workstation model, and gemma4:31b for the full large model.

Know what each tag expects

On the Ollama library page, e2b is listed at 7.2GB with 128K context, e4b at 9.6GB with 128K, 26b at 18GB with 256K, and 31b at 20GB with 256K.

Run your first prompt

For a first text test, run ollama run gemma4 "Hello, what can you do?". Ollama also supports image input with the prompt form shown in the official guide.

Use the local API for app integration

Ollama exposes a local web service at http://localhost:11434/api/generate, so you can move from CLI testing to a lightweight local application without setting up a separate model server.
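
As a minimal sketch of that local integration, the snippet below posts a prompt to the endpoint named above using only the Python standard library. It assumes an Ollama server is already running on the default port and that the model tag has been pulled; the helper names `build_generate_request` and `generate` are illustrative, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # endpoint named in the text above


def build_generate_request(prompt: str, model: str = "gemma4") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "gemma4", timeout: float = 120.0) -> str:
    """Send the prompt to a locally running Ollama server and return the text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        body = json.loads(reply.read().decode("utf-8"))
    # With "stream": False, Ollama returns a single JSON object whose
    # "response" field holds the generated text.
    return body["response"]
```

Calling `generate("Hello, what can you do?", model="gemma4:e2b")` would then return the reply as a string, provided the server is up and the tag exists locally.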

Quick Tips

- E2B and E4B are the practical first picks for local experimentation on lighter hardware.

- The 26b tag targets the 26B A4B MoE model, which uses less active compute than a dense model of similar total size.

- ollama list shows all locally downloaded models and their sizes.

- Ollama supports image input: run a tag such as gemma4:e2b and include an image path in the prompt.

Gemma 4 API Guide

The Gemini API provides hosted access to Gemma 4, useful when building without managing local inference. The hosted Gemma 4 models in AI Studio and the Gemini API are gemma-4-26b-a4b-it and gemma-4-31b-it.

Create an API key in Google AI Studio

Open Google AI Studio and create a Gemini API key. New users can start with a default Google Cloud project, while existing users can import a Cloud project and create keys there.

Set the key in your environment

The Gemini SDKs automatically pick up GEMINI_API_KEY or GOOGLE_API_KEY. If both are set, GOOGLE_API_KEY takes precedence.

Install the official SDK

For Python, install google-genai. For JavaScript and TypeScript, install @google/genai. Google also publishes SDK paths for Go, Java, C#, and Apps Script.

Choose the hosted Gemma 4 model ID

For hosted Gemma 4, use gemma-4-26b-a4b-it for a faster MoE large model, or gemma-4-31b-it for the flagship dense checkpoint.

Send a first generateContent request

The official example uses client.models.generate_content with the model field set to gemma-4-31b-it. In REST, requests go to the generateContent endpoint with the x-goog-api-key header.
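
The REST path can be sketched with the standard library alone. The snippet below is an illustration rather than the official sample: the `v1beta` base URL and the response layout are assumptions based on the public Gemini REST reference, and the helper names are hypothetical. `resolve_api_key` imitates the environment-variable precedence described earlier; the official SDKs do this for you.

```python
import json
import os
import urllib.request

# Assumed base URL for the Gemini REST API; check the current API reference.
API_ROOT = "https://generativelanguage.googleapis.com/v1beta"


def resolve_api_key(env=None) -> str:
    """Pick the key the way the text describes: GOOGLE_API_KEY wins if both are set."""
    env = os.environ if env is None else env
    key = env.get("GOOGLE_API_KEY") or env.get("GEMINI_API_KEY")
    if not key:
        raise RuntimeError("Set GEMINI_API_KEY or GOOGLE_API_KEY first")
    return key


def build_request(prompt: str, api_key: str,
                  model: str = "gemma-4-31b-it") -> urllib.request.Request:
    """Build a generateContent request authenticated with the x-goog-api-key header."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        f"{API_ROOT}/models/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def generate(prompt: str, model: str = "gemma-4-31b-it") -> str:
    """Send the request and return the text of the first candidate."""
    request = build_request(prompt, resolve_api_key(), model)
    with urllib.request.urlopen(request, timeout=60) as reply:
        data = json.loads(reply.read().decode("utf-8"))
    return data["candidates"][0]["content"]["parts"][0]["text"]
```

Switching to the MoE model only requires passing `model="gemma-4-26b-a4b-it"`.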

Use AI Studio to bridge from testing to code

Google AI Studio lets you experiment with prompts, model settings, function calling, and structured output, then export working code through the Get code flow.

Quick Tips

- AI Studio is the fastest way to test Gemma 4 prompts before writing any code.

- The Gemini API supports streaming responses for chat and long-generation use cases.

- gemma-4-26b-a4b-it is the MoE model — generally faster and more cost-efficient than 31B.

- Function calling and structured output are available for both hosted Gemma 4 model IDs.

Gemma 4 Hugging Face Download

The official Google collection on Hugging Face includes eight core Gemma 4 checkpoints: E2B, E4B, 26B A4B, and 31B, each in base and instruction-tuned form. Instruction-tuned (-it) repositories are the natural starting point for chat, coding, and assistant experiences.

google/gemma-4-E2B-it

Edge checkpoint with text, image, and audio input and 128K context. Best for fast local assistants and on-device multimodal experimentation.

google/gemma-4-E4B-it

Stronger edge checkpoint with text, image, and audio input and 128K context. More capable than E2B without jumping to workstation-class hardware.

google/gemma-4-26B-A4B-it

Mixture-of-Experts checkpoint with 256K context and text-image input. Large-model quality with faster effective inference than a dense model of similar total size.

google/gemma-4-31B-it

Flagship dense Gemma 4 checkpoint with 256K context and text-image input. Best for the strongest chat, reasoning, coding, and agent workflows.

google/gemma-4-E2B

Base edge checkpoint for users who want to study, adapt, or fine-tune the smallest multimodal Gemma 4 model.

google/gemma-4-E4B

Base edge checkpoint that keeps text, image, and audio input while leaving downstream instruction behavior to your own tuning pipeline.

google/gemma-4-26B-A4B

Base MoE large checkpoint for custom adaptation where you want the 26B A4B architecture without default instruction-tuned behavior.

google/gemma-4-31B

Base 31B dense checkpoint for teams that want the largest official Gemma 4 foundation model before their own fine-tuning or alignment stage.

Choose the Right Gemma 4 Size for Your Hardware

Gemma 4 ships in four sizes with very different trade-offs. The fastest choice is not always the smallest model, and the highest-quality choice is not always the easiest one to deploy.

Gemma 4 is available in two edge-first dense models, one efficient Mixture-of-Experts model, and one large dense model. For most teams, the real decision is not just quality, but where the model runs: phone, laptop, workstation, or server. A practical starting point is 26B A4B when you want strong quality without jumping all the way to 31B.

Gemma 4 E2B

Offline assistants, lightweight multimodal apps, edge deployment

Gemma 4 E4B

Stronger local copilots, on-device reasoning, multimodal apps with more headroom

Gemma 4 26B A4B

Best balance of quality, speed, and long-context work for most teams

Gemma 4 31B

Highest-end reasoning, coding, and multimodal quality in the Gemma 4 family

The Gemma 4 Specs That Actually Matter Before You Build

For most builders, the key questions are context length, modalities, language coverage, licensing, and app-level features. These are the specs that change implementation choices, hosting cost, and product scope.

Gemma 4 is not just a text model refresh. The family combines long context, multimodal input, thinking mode, native system prompts, and function-calling support in one open-weight lineup. The smaller models add audio input, while the larger models extend context to 256K for document-heavy and repository-scale workloads.

March 31, 2026

This is the current Gemma core generation and the one Google now highlights across docs and launch materials.

Modalities: text and image input with text output on all models; E2B and E4B also support audio input

You can build text-only, vision, and lightweight speech understanding flows without switching model families.

Context length: 128K tokens on E2B and E4B; 256K tokens on 26B A4B and 31B

Large prompts such as long documents, long chats, or multi-file code context fit in a single request.

Language coverage: over 140 languages

This matters for multilingual products, OCR, and globally deployed assistants.

Licensing: Apache 2.0 license with open weights and support for responsible commercial use

You can tune, deploy, and run Gemma 4 in your own stack with fewer licensing constraints.

App-level features: configurable thinking mode, native system role support, structured JSON output, and function calling

These features make Gemma 4 much easier to use for agents, tool use, and instruction-heavy applications.

Image input: variable image resolutions and token budgets of 70, 140, 280, 560, or 1120 tokens

You can trade image detail for speed depending on whether the task is OCR, UI reading, chart analysis, or fast frame processing.

Official Gemma 4 Benchmark Snapshot

These scores show where each Gemma 4 size is strongest across reasoning, coding, science, vision, and long-context retrieval. Use them to shortlist a model quickly, then match that shortlist to your latency and memory budget.

Gemma 4 is positioned as a model family for reasoning, agentic workflows, coding, and multimodal understanding. The official benchmark tables show a clear pattern: 31B leads, 26B A4B stays surprisingly close while being much more efficient, and E4B and E2B bring meaningful capability to smaller devices.

MMLU Pro

Knowledge and reasoning

Best quick comparison for general high-level reasoning performance across the family.

AIME 2026 (no tools)

Math reasoning

31B and 26B A4B are the right targets for math-heavy assistants and planning tasks.

LiveCodeBench v6

Competitive coding

If coding is a primary use case, the larger two models are in a different tier from the edge models.

GPQA Diamond

Scientific reasoning

A strong signal for technical and expert-facing workflows.

MMMU Pro

Multimodal reasoning

Vision tasks benefit heavily from the larger models when accuracy matters more than footprint.

MRCR v2 (128K, 8-needle)

Long-context retrieval

For large-document and repository-scale prompting, 31B is the strongest long-context choice.

How to Fine-Tune Gemma 4 for Real Product Work

Fine-tuning matters when prompting alone is not enough and you want Gemma 4 to perform better on a specific domain, workflow, or role. The practical paths are lightweight adapter tuning for text tasks and multimodal adapter tuning for image-plus-text tasks.

The official Gemma tuning docs center on a simple rule: tune for a defined task, not for vague improvement. For many builders, QLoRA is the most realistic place to start because it keeps hardware requirements much lower than full-model tuning.

Start with a narrow tuning goal

Choose a task or role that the base model should perform better, such as customer support, text-to-SQL, or product description generation. Use fine-tuning when the task is specific and repeated.

Pick the tuning path

Use text tuning for instruction and generation tasks, or vision tuning when your dataset combines images and text. The text QLoRA guide demonstrates text-to-SQL; the vision QLoRA guide demonstrates image-plus-text product descriptions.

Choose a realistic framework

Gemma 4 supports Keras with LoRA, the Gemma library, Hugging Face-based workflows, GKE, and Vertex AI. Hugging Face plus TRL is the most direct path for many developers.

Match the workflow to your hardware

The official text QLoRA example is designed around a T4 16GB setup. The vision QLoRA guide calls for a BF16-capable GPU such as NVIDIA L4 or A100 with more than 16GB of memory.

Use QLoRA when efficiency matters

QLoRA keeps the base model quantized to 4-bit, freezes the original weights, and trains only the added LoRA adapters. This lowers memory usage while preserving strong task performance.
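
A little arithmetic shows why this saves memory. The sketch below counts the parameters one LoRA adapter adds to a single weight matrix and compares 4-bit against BF16 storage for the frozen weights. The layer shape and rank are hypothetical values chosen for illustration, not Gemma 4 dimensions, and the storage estimate ignores quantization metadata.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters added by one LoRA adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


def weight_bytes(n_params: int, bits: int) -> float:
    """Raw storage for n_params weights at the given bit width (no metadata)."""
    return n_params * bits / 8


# Hypothetical layer shape and rank, for illustration only.
d_in, d_out, rank = 4096, 4096, 16

frozen = d_in * d_out                                  # weights that stay frozen
adapter = lora_trainable_params(d_in, d_out, rank)     # weights that are trained

print(f"frozen weights:    {frozen:,}")
print(f"trainable weights: {adapter:,} ({adapter / frozen:.2%} of the layer)")
print(f"BF16 storage:  {weight_bytes(frozen, 16) / 2**20:.0f} MiB")
print(f"4-bit storage: {weight_bytes(frozen, 4) / 2**20:.0f} MiB")
```

For this example shape the adapter trains under one percent of the layer's weights, while the frozen matrix shrinks to a quarter of its BF16 size.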

Prepare data in the right format

Build a dataset that directly matches the behavior you want, then format it for conversation-style training with TRL and SFTTrainer. The official text guide uses a large synthetic text-to-SQL dataset.
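
As a rough sketch of that formatting step, the function below turns one text-to-SQL record into the conversational "messages" layout that TRL's SFTTrainer accepts. The input field names (`schema`, `question`, `sql`) are hypothetical and will differ per dataset; the `assistant` role is the common chat-template name for the model turn.

```python
def to_conversation(example: dict, system_prompt: str) -> dict:
    """Turn one text-to-SQL record into a conversational training sample."""
    user_text = f"Schema:\n{example['schema']}\n\nQuestion: {example['question']}"
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
            # The target completion the model should learn to produce.
            {"role": "assistant", "content": example["sql"]},
        ]
    }


# Hypothetical record, for illustration only.
record = {
    "schema": "CREATE TABLE players (id INT, name TEXT, score INT);",
    "question": "Who has the highest score?",
    "sql": "SELECT name FROM players ORDER BY score DESC LIMIT 1;",
}
sample = to_conversation(record, "Translate the question into SQL for the given schema.")
```

With a Hugging Face dataset, a function like this would typically be applied through `dataset.map(...)` before training.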

Evaluate, compare, and deploy

After training, run inference checks against your base model, verify task gains, and then deploy the tuned model or adapter. Treat deployment format as an early decision because framework choice affects the output format you get.

Quick Tips

- Start with QLoRA and a T4-class GPU for text tasks — full fine-tuning is rarely needed for task adaptation.

- Format your dataset to mirror the instruction-tuned chat format that Gemma 4 already understands.

- Hold out an eval set drawn from the same distribution as your training data so that measured gains are meaningful.

- The MoE model 26B A4B has efficient active parameters, but its total parameter count still affects checkpoint size during training.

- Use the Gemma 4 -it checkpoint as your starting point for instruction tasks rather than the pre-trained base.

Gemma 4 Prompt Guide

Gemma 4 introduces a new turn-based prompt format with native system instructions, multimodal placeholders, and built-in controls for thinking and tool use.

This guide turns the official Gemma 4 format into a practical prompt library. Structure every interaction as turns, use the system role for behavior and global rules, insert image or audio placeholders where needed, and only enable thinking or tool use when the task actually benefits from them.

Core chat skeleton

Gemma 4 uses native system, user, and model roles, wrapped in turn markers.

- Use system for global instructions

- Use user for the current request

- Use model as the generation start point

System prompt pattern

Put stable behavior rules in one system turn instead of repeating them every time.

- Good for style, scope, and output format

- Native system role support starts with Gemma 4

- Keep it concise and task-specific

Multimodal placeholders

Use placeholder tokens to indicate where image and audio embeddings should be inserted.

- Use <|image|> for images

- Use <|audio|> for audio

- The processor replaces placeholders with embeddings after tokenization

Thinking-ready prompt

Thinking mode is activated by placing <|think|> inside the system instruction.

- Enable it for reasoning-heavy tasks

- Keep it off for simple direct generation

- Use one system turn for both thinking and other global instructions
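
The patterns above can be sketched as a small message builder. This is an illustration under assumptions: the exact position of the thinking token inside the system turn, and whether you insert it yourself or let the chat template do it, depend on your stack. The helper name `build_messages` is hypothetical.

```python
THINK_TOKEN = "<|think|>"  # control token named in the text above


def build_messages(user_text: str, system_rules: str = "", thinking: bool = False) -> list:
    """Build a turn list with at most one system turn.

    Assumption: the thinking token is placed at the start of the system turn,
    followed by any other global instructions.
    """
    system_parts = []
    if thinking:
        system_parts.append(THINK_TOKEN)
    if system_rules:
        system_parts.append(system_rules)

    messages = []
    if system_parts:
        # One system turn carries both the thinking switch and the global rules.
        messages.append({"role": "system", "content": "\n".join(system_parts)})
    messages.append({"role": "user", "content": user_text})
    return messages
```

With no rules and thinking off, the result is a plain user turn, which keeps simple requests free of any system overhead.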

Tool-aware prompt structure

Tool declarations belong in the system turn, and tool calls and tool responses are handled with dedicated control tokens.

- Useful for APIs, search, calculators, and external data lookups

- Tool use is structured, not simulated in plain text

- Reasoning and tool use can happen in the same turn

Gemma 4 Thinking Mode

Thinking mode lets Gemma 4 produce a reasoning channel before the final answer, and the processor can separate both parts for application use.

Thinking mode is best for tasks where the model benefits from intermediate reasoning before it answers: ambiguous questions, math, coding, tool planning, and multimodal analysis. In Gemma 4, you can enable it at the chat-template level, stream the reasoning live, and then split the output into a thinking block and a user-facing answer block.

Choose the right tasks

Use thinking mode when the request needs decomposition, comparison, planning, or careful interpretation rather than a short direct reply.

- Good fits: math, code debugging, structured decision-making, image-plus-text reasoning

- Less necessary for simple rewrites, short summaries, or straightforward facts

- Official examples cover both text-only and image-text workflows

Enable thinking in the chat template

With Hugging Face Transformers, set enable_thinking=True in apply_chat_template(). At the token level, Gemma 4 uses <|think|> in the system turn.

- E2B and E4B: thinking OFF uses a simple user-model flow; thinking ON adds a system turn with <|think|>

- 26B A4B and 31B: official templates include an empty thinking token when thinking is off to stabilize output

- Thinking is designed to be enabled at the conversation level

Generate and separate the result

The model can emit a reasoning channel first and the final answer after it. You can stream it with TextStreamer and split it with parse_response().

- processor.parse_response() returns separated thinking and answer content

- This works for text prompts and image-text prompts

- The reasoning channel can also include tool calls when the turn becomes agentic

Handle multi-turn chats correctly

For normal multi-turn conversations, strip the previous turn's generated thoughts before sending the history back. In tool-calling turns, keep the thought flow intact until the tool cycle finishes.

- Regular chat: remove prior thought blocks before the next turn

- Tool-use exception: do not remove thoughts between function calls inside the same turn

- This keeps context clean while preserving agentic behavior
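
One way to apply these rules in application code is sketched below. It assumes your app stores each turn as a dict and keeps the parsed reasoning under a hypothetical `thinking` key; the actual structure returned by your processor may differ.

```python
def strip_previous_thoughts(history: list, tool_cycle_open: bool = False) -> list:
    """Return a copy of the chat history that is ready to send back to the model.

    Assumption: reasoning text lives under a hypothetical "thinking" key.
    While a tool cycle is still open, thoughts are kept untouched.
    """
    if tool_cycle_open:
        return [dict(message) for message in history]
    return [
        {key: value for key, value in message.items() if key != "thinking"}
        for message in history
    ]


# Hypothetical history, for illustration only.
history = [
    {"role": "user", "content": "Is 91 prime?"},
    {"role": "model", "thinking": "91 = 7 * 13, so no.", "content": "No, 91 = 7 x 13."},
]
clean = strip_previous_thoughts(history)
```

The function copies each turn instead of mutating it, so the app can still log or display the original reasoning.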

Gemma 4 Function Calling

Gemma 4 supports native structured tool use, letting the model request functions instead of faking external actions in plain text.

Function calling is the practical bridge between model output and real application behavior. Instead of asking Gemma 4 to guess live data or simulate actions, you define tools, let the model generate a structured call, execute the function in your app, and then feed the result back so the model can finish with a clean natural-language answer.

Define tools clearly

Pass tools through apply_chat_template() using either a manual JSON schema or a raw Python function converted to schema.

- Manual JSON schema is best when you need precise nested parameters

- Raw Python functions are convenient for simple tools with clear type hints and docstrings

- Tool definitions should include name, description, parameter types, and required fields
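
Both definition styles can be sketched for the same tool. The `get_weather` tool, its parameters, and the stub return value are hypothetical; the dictionary follows the JSON-schema layout commonly used for the `tools` argument of chat templates in Hugging Face Transformers.

```python
# Style 1: manual JSON schema, useful when parameters need precise control.
get_weather_tool = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name, e.g. Paris"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
}


# Style 2: a plain Python function whose type hints and docstring carry the schema.
def get_weather(city: str, unit: str = "celsius") -> dict:
    """Get the current weather for a city.

    Args:
        city: City name, e.g. Paris
        unit: Temperature unit, either "celsius" or "fahrenheit"
    """
    # Stub value for illustration; a real handler would call a weather service.
    return {"city": city, "unit": unit, "temperature": 21}
```

Either object would then be passed in a list as the tools argument when applying the chat template.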

Let the model request a tool

Gemma 4 receives the user prompt plus available tools and returns a structured function call object rather than plain text when a tool is needed.

- Tool use is controlled with dedicated tokens such as tool, tool_call, and tool_response

- A typical example is a weather or search function

- This is better than plain text when the answer depends on external state or system actions

Validate and execute in your app

Gemma 4 cannot execute code on its own. Your application must parse the function name and arguments, validate them, and run the real function safely.

- Always validate function names and arguments before execution

- Do not rely on generated code without safeguards

- For production systems, map tool names to approved handlers instead of dynamic execution
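
The allow-list approach can be sketched as follows. The parsed call shape (`name` plus `arguments`) and the `set_volume` tool are assumptions for illustration; adapt them to whatever your parser actually returns.

```python
def set_volume(level: int) -> dict:
    """Hypothetical handler: pretend to change a volume setting."""
    return {"ok": True, "level": level}


# Only tools listed here can ever run; each entry declares its argument types.
APPROVED_TOOLS = {
    "set_volume": {"handler": set_volume, "params": {"level": int}},
}


def execute_tool_call(call: dict) -> dict:
    """Validate a parsed tool call, then run the matching approved handler."""
    name = call.get("name")
    spec = APPROVED_TOOLS.get(name)
    if spec is None:
        return {"error": f"unknown tool: {name!r}"}

    args = call.get("arguments")
    if not isinstance(args, dict):
        return {"error": "arguments must be an object"}

    expected = spec["params"]
    if set(args) != set(expected):
        return {"error": f"expected arguments {sorted(expected)}, got {sorted(args)}"}
    for key, kind in expected.items():
        if not isinstance(args[key], kind):
            return {"error": f"argument {key!r} must be {kind.__name__}"}

    return {"result": spec["handler"](**args)}
```

Returning errors as structured data, rather than raising, lets the application feed the failure back to the model as a tool response.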

Return tool output for the final answer

Append the tool result back into the chat history, then let Gemma 4 produce the final user-facing response.

- Official workflow: define tools, model turn, developer turn, final response

- This pattern works for APIs, live lookups, calculators, settings updates, and agent loops

- Tool responses should stay structured so the model can ground the final answer correctly

Gemma 4 Multimodal Guide

Gemma 4 handles text and image across all models, supports video as frames, and adds native audio support on E2B and E4B.

Gemma 4 is built for multimodal input. All models support image and video-style visual understanding, the small models add audio input, and the runtime lets you trade off visual detail against speed using token budgets. That makes Gemma 4 suitable for OCR, captioning, object detection, speech tasks, and mixed media prompts inside one chat flow.

Image understanding

All Gemma 4 models support text-plus-image workflows.

- Common tasks: OCR, object detection, visual question answering, image captioning

- Supports reasoning across multiple images in one prompt

- Best for screenshots, documents, product images, and scene analysis

Video understanding

All Gemma 4 models can process video as a sequence of frames.

- Good for scene description, human interaction, and situational summaries

- Video is passed as a content item in the messages array

- Maximum supported video length is 60 seconds at 1 frame per second
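
If you extract frames yourself, the limits above translate into a simple sampling rule. The sketch below picks one source frame per second and stops at 60 seconds; truncating longer clips to their first minute is a design choice of this example, not documented behavior.

```python
MAX_VIDEO_SECONDS = 60  # limit stated above
SAMPLE_FPS = 1          # sampling rate stated above


def frame_indices(duration_s: float, native_fps: float) -> list:
    """Indices of the source frames to keep when sampling at 1 fps.

    Clips longer than 60 seconds are truncated to their first 60 seconds.
    """
    usable_seconds = min(duration_s, MAX_VIDEO_SECONDS)
    frame_count = int(usable_seconds * SAMPLE_FPS)
    return [int(round(second * native_fps / SAMPLE_FPS)) for second in range(frame_count)]
```

For a 3-second clip recorded at 30 fps, this keeps frames 0, 30, and 60.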

Audio understanding

Audio is available on the E2B and E4B models.

- Supports multilingual speech recognition, speech translation, and general speech understanding

- Audio token cost is 25 tokens per second

- Maximum audio length is 30 seconds
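
These two limits make audio budgeting easy to estimate. The sketch below computes the token cost of a clip and splits a longer recording into windows of at most 30 seconds. Rounding up partial seconds and splitting without overlap are assumptions of this example.

```python
import math

AUDIO_TOKENS_PER_SECOND = 25  # cost stated above
MAX_AUDIO_SECONDS = 30        # limit stated above


def audio_token_cost(seconds: float) -> int:
    """Estimated prompt tokens for one clip (partial seconds rounded up)."""
    if seconds > MAX_AUDIO_SECONDS:
        raise ValueError("clip exceeds the 30-second limit; split it first")
    return math.ceil(seconds * AUDIO_TOKENS_PER_SECOND)


def split_into_clips(total_seconds: float) -> list:
    """Split a recording into back-to-back (start, end) windows of at most 30 s."""
    clips = []
    start = 0.0
    while start < total_seconds:
        end = min(start + MAX_AUDIO_SECONDS, total_seconds)
        clips.append((start, end))
        start = end
    return clips
```

A full 30-second clip costs 750 tokens under this estimate, so a 75-second recording split into three clips needs three requests.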

Visual token budgets

Gemma 4 introduces variable-resolution image processing so you can choose speed or detail based on the task.

- Supported image budgets: 70, 140, 280, 560, 1120 tokens

- Lower budgets for faster classification, captioning, and video frame analysis

- Higher budgets for OCR, document parsing, and reading small text
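
A small helper can make the trade-off explicit when several images share one prompt. The sketch below picks the largest supported budget that fits a per-image token allowance; the helper names and the idea of dividing a fixed allowance evenly across images are assumptions for illustration.

```python
IMAGE_TOKEN_BUDGETS = (70, 140, 280, 560, 1120)  # budgets listed above


def pick_budget(max_tokens_per_image: int) -> int:
    """Largest supported image budget that does not exceed the allowance."""
    fitting = [budget for budget in IMAGE_TOKEN_BUDGETS if budget <= max_tokens_per_image]
    if not fitting:
        raise ValueError("allowance is below the smallest supported budget (70)")
    return max(fitting)


def budget_for_images(image_count: int, tokens_available: int) -> int:
    """Per-image budget when a fixed token allowance is shared evenly."""
    if image_count < 1:
        raise ValueError("image_count must be at least 1")
    return pick_budget(tokens_available // image_count)
```

For example, 60 video frames sharing 8,400 tokens leave 140 tokens per frame, which maps directly to the 140-token budget.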

Input preparation rules

The processor handles much of the media formatting, but a few limits matter in production.

- Audio should be mono, 16 kHz, float32, normalized to [-1, 1]

- Image file support depends on the framework used to convert files into tensors

- Prompt quality still matters: specific instructions outperform vague multimodal requests
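
The audio rule above can be sketched with the standard library. This example converts interleaved 16-bit PCM samples to mono float32 in the required range; it does not resample, so input at another rate is rejected. The function name and the choice to average channels are assumptions of this sketch.

```python
from array import array

TARGET_SAMPLE_RATE = 16_000  # 16 kHz, as stated above


def pcm16_to_mono_float32(samples, channels: int, sample_rate: int) -> array:
    """Convert interleaved 16-bit PCM to mono float32 values in [-1, 1]."""
    if sample_rate != TARGET_SAMPLE_RATE:
        raise ValueError("resample the audio to 16 kHz first")
    if channels < 1 or len(samples) % channels != 0:
        raise ValueError("sample count must be a multiple of the channel count")

    mono = array("f")  # "f" is a 32-bit float array
    for index in range(0, len(samples), channels):
        frame = samples[index:index + channels]
        # Average the channels, then scale by the int16 magnitude.
        mono.append(sum(frame) / (channels * 32768.0))
    return mono
```

The input can be any integer sequence, for example an `array("h")` filled from the frames of a WAV file.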

Model capability split

Use the smallest models for mobile and speech-heavy use cases, and the larger models for heavier reasoning with long context.

- E2B and E4B: audio-enabled small models with 128K context

- 26B A4B and 31B: larger reasoning-focused models with 256K context

- All four official sizes are available in base and instruction-tuned variants

Gemma 4 GGUF and Quantization

Choose the smallest Gemma 4 footprint that still fits your machine

For most local setups, the practical decision is whether to stay with E2B or E4B, or move up to a 26B A4B GGUF build. Google documents approximate memory needs for BF16, SFP8, and 4-bit-style deployment choices across all four official sizes.

Official local entry points

Google's Ollama guide exposes four Gemma 4 tags: gemma4:e2b, gemma4:e4b, gemma4:26b, and gemma4:31b. LM Studio also supports Gemma models in both GGUF and MLX formats for fully local inference.

Start with E2B or E4B for a lighter local loop, and move to 26B or 31B only when you have the RAM budget and want a stronger reasoning model.

Approximate memory by official size

Google lists approximate inference memory for each size, as BF16 / Q4_0:

- E2B: 9.6 GB / 3.2 GB

- E4B: 15 GB / 5 GB

- 26B A4B: 48 GB / 15.6 GB

- 31B: 58.3 GB / 17.4 GB

If your target is a mainstream local machine, 4-bit-style deployment or a smaller model size is usually the line between runnable and impractical.
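
To make that line concrete, the sketch below filters the approximate figures quoted in this section against a memory budget. The 2 GB default headroom for context and runtime overhead is an assumption of this example, not an official number.

```python
# Approximate inference memory in GB, as quoted in this section.
APPROX_MEMORY_GB = {
    "E2B": {"bf16": 9.6, "q4_0": 3.2},
    "E4B": {"bf16": 15.0, "q4_0": 5.0},
    "26B A4B": {"bf16": 48.0, "q4_0": 15.6},
    "31B": {"bf16": 58.3, "q4_0": 17.4},
}


def runnable_options(available_gb: float, headroom_gb: float = 2.0) -> list:
    """List (model, precision) pairs whose approximate footprint fits the budget.

    headroom_gb is an assumed reserve for context and runtime overhead.
    """
    budget = available_gb - headroom_gb
    return [
        (model, precision)
        for model, sizes in APPROX_MEMORY_GB.items()
        for precision, size_gb in sizes.items()
        if size_gb <= budget
    ]
```

With 16 GB available, only E2B (either precision) and 4-bit E4B pass the filter; with 24 GB, the 4-bit builds of 26B A4B and 31B also qualify.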

Official 26B A4B GGUF example

The official ggml-org Gemma 4 26B A4B IT GGUF page recommends llama-server for startup and lists Q4_K_M at 16.8 GB, Q8_0 at 26.9 GB, and F16 at 50.5 GB.

Q4_K_M is the most practical default when you want a large local Gemma 4 model but cannot afford Q8_0 or full 16-bit memory use.

What quantization changes

Higher parameter counts and higher precision are generally more capable, but they cost more processing cycles, memory, and power. Lower precision reduces those costs but can reduce capability.

Use quantization to fit the model to your hardware: smaller GGUF builds help you run locally, but they are a deployment compromise rather than a free upgrade.

Gemma 4 PyTorch Guide

Run Gemma 4 from a PyTorch-first stack

The fastest Python path for Gemma 4 is Hugging Face Transformers on top of PyTorch: install torch and transformers, pick a Gemma 4 model ID, and begin with pipeline-based text inference before moving into multimodal or tool-enabled workflows.

Install the runtime

Google's Gemma 4 text inference guide starts with torch, accelerate, and transformers, plus dialog for conversation handling.

Pick an official Gemma 4 checkpoint

Google's Gemma 4 examples show four official instruction-tuned IDs: google/gemma-4-E2B-it, google/gemma-4-E4B-it, google/gemma-4-26B-A4B-it, and google/gemma-4-31B-it.

Start with text generation

Use transformers.pipeline with task="text-generation", device_map="auto", and dtype="auto" as the quickest way to get a first response.

Move to multimodal and tools when needed

For multimodal and function-calling workflows, use AutoProcessor and AutoModelForMultimodalLM with apply_chat_template for tool-aware prompting.

Use native PyTorch for deeper control

Google's PyTorch guide documents Kaggle credential setup, dependency installation, cloning gemma_pytorch, and loading multimodal model classes for experimentation with direct checkpoint control.

Gemma 4 Mobile Deployment

Put Gemma 4 on mobile through the current Android stack

Gemma 4 now has three practical mobile-facing paths: ML Kit Prompt API on AICore preview devices, Android Studio local-model workflows for developer-side usage, and LiteRT-LM for lower-level runtime control across mobile and embedded devices.

Choose the path that matches your goal

Use ML Kit Prompt API on AICore if you are building an Android app experience, Android Studio local models if you want offline coding help, and LiteRT-LM if you need lower-level runtime control.

Prototype on-device with AICore

Google's April 2026 preview lets you target Gemma 4 E2B or E4B through model preference settings inside the Prompt API flow on AICore-enabled devices.

Know the device expectations

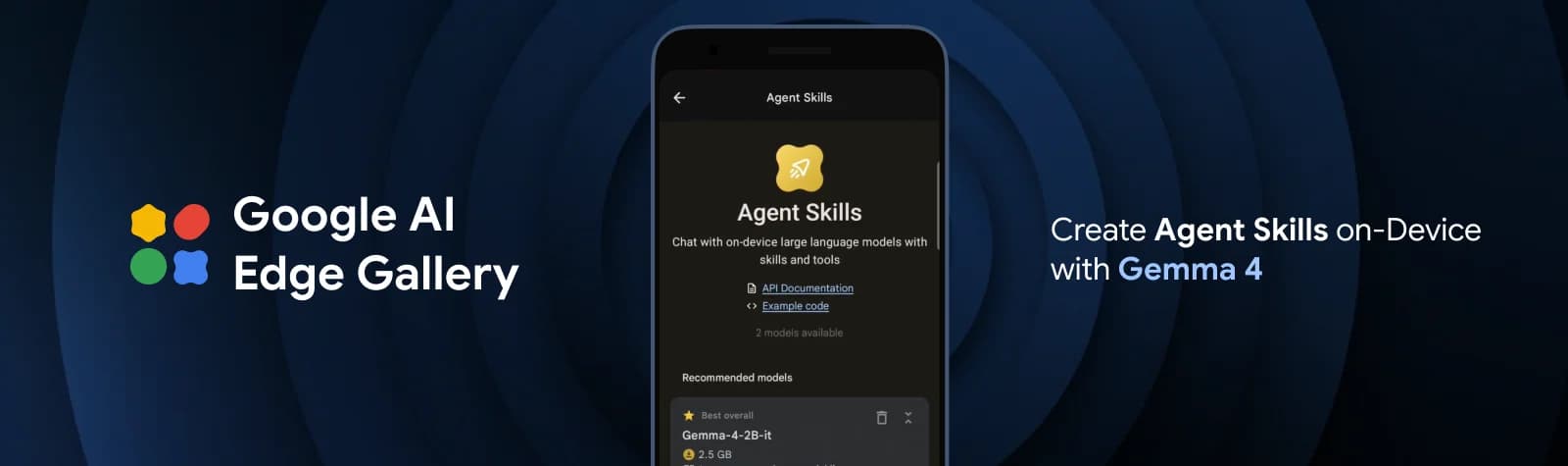

Preview models run on AICore-enabled devices and the latest AI accelerators from Google, MediaTek, and Qualcomm. AI Edge Gallery is available for quick model checks on non-AICore devices.

Use Android Studio for developer-side workflows

Android Studio currently recommends Gemma 4 as its local model option. Gemma E4B requires 12 GB RAM and 4 GB storage; Gemma 26B MoE requires 24 GB RAM and 17 GB storage.

Switch to LiteRT-LM for deeper runtime control

LiteRT-LM is a cross-platform library for language model pipelines from phones to embedded systems, with CPU, GPU, and NPU paths including Qualcomm AI Engine Direct and MediaTek NeuroPilot.

Gemma 4 vs Gemma 3

See what actually changes when you move from Gemma 3 to Gemma 4

This comparison is for developers deciding whether to keep an existing Gemma 3 workflow or rebuild around Gemma 4. The clearest differences show up in context length, control format, multimodal scope, and benchmark performance at the top end of each family.

Release and core sizes

Gemma 4 trims the family around clearer deployment tiers: edge-first E-models plus larger workstation-class models.

Context window

For long documents, tool traces, or multi-step history, Gemma 4's larger models open significantly more headroom.

Multimodality

Gemma 4 is the broader multimodal family if your use case moves beyond image-text into video, OCR-heavy flows, or audio-capable edge models.

Prompt and control format

Teams building agents or structured workflows get a cleaner control surface in Gemma 4.

Top-end benchmark snapshot

If upgrading for reasoning, coding, or high-difficulty QA, the top-end Gemma 4 jump is large enough to justify a migration.

Deployment profile

Stay on Gemma 3 when small classic sizes already fit your stack; move to Gemma 4 when you want newer control features, larger-context top models, or stronger edge-oriented variants.